Cover image: Egyptian Musicians (Rawabí) and Almée by Ernest Benecke. Daguerreotype. February, 1852. Public domain.

The transition from mechanical reproduction to digital transmission and artificial generation reveals a history of music production rooted in cultural disruption and democratization.

Twenty-six years ago, I sat in a tiny second-story apartment at a meager audio production workstation cobbled together from a Compaq Pentium 2 PC, a used CRT monitor, old JBL stereo speakers, a TEAC amplifier, a TASCAM mixer, a pair of Alesis 3630 compressors and SM-57 microphones. I had a few guitars, a bass, a flute and a random assortment of percussion. It was primitive, but it unleashed capabilities that would have been impossible for me in the analog domain just a few years before.

But I wasn’t making music that day; I was writing a paper for a philosophy conference I was attending that summer.

I’d spent the preceding year reading works by thinkers like Hannah Arendt, Herbert Marcuse, Walter Benjamin, Max Horkheimer, and Theodor Adorno, German intellectuals driven into exile by the Nazi regime, several of whom were associated with what became known as the Frankfurt School. At the same time, a major technological shift was happening. Record companies had seen massive profits from CDs over the previous decade. The internet was blossoming, and innovations in decentralized file-sharing networks meant users could easily copy and share retail releases, which threatened the industry’s business model.

I stared at my screen as the theories of the Frankfurt School and the digital paradigm shift swirled and intertwined in my mind. I decided to write about the future of music in the age of the decentralized network we still often called the World Wide Web: the next logical phase beyond the age of mechanical reproduction and broadcast that the Frankfurt theorists critiqued.

That paper is now buried somewhere in an attic, or lost to the ages, but I remember its ideas. As a Gen X musician who grew up during the peak of commercial music, I cut my teeth on analog multi-track recording systems, and migrated to digital by the end of the 90s. I like to think my generation has a unique perspective on that historic transition, having lived through the eras of records, cassettes, CDs, and MTV as children and young adults before the emergence of the hyper-connected world of today.

Humans appear to be unusual among animals in the pleasure we take in music. One hypothesis holds that this is the result of millennia of evolution as bipedal travelers who communicate vocally. Our nomadic lives involved repeated footfalls in groups, providing haptic feedback to our nervous systems. We carried our young as we walked until they were fast enough to keep up. Over time, that rhythm came to evoke a feeling of safety, comfort, and eventually pleasure separate from the physical motion.

Music held more cultural weight in the 20th century. The barriers to manufacturing, distributing, and marketing music were immense. By 2000, I’d already spent a decade chasing that dream and failing. Recording and distributing professional quality physical media cost thousands of dollars in the 90s. Promoting that music through touring, magazines, radio, and TV required even more.

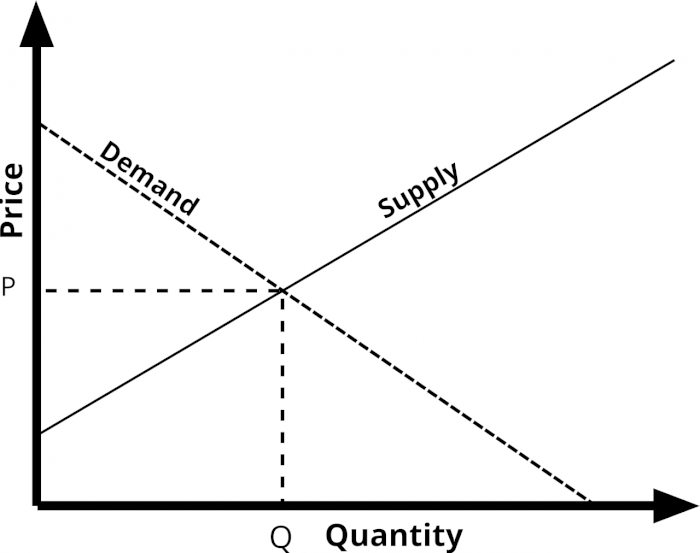

Record labels acted as the gatekeepers with a strong economic incentive to control the supply and demand of music. They knew the average consumer had limited time and money to spend on their products. Radio, television, and print could only cycle through so many catchy songs and artists per year. The popularity of the stars they helped create drove magazine and record sales. Terrestrial broadcast radio was expensive to operate. It was the way people discovered and enjoyed music. Radio stations repeated the same songs because listeners preferred familiarity. Many stations played the same songs for decades.

All of this created a shared, homogeneous music culture. Most people aged 10 through 30 in the US from 1950 to 2000 knew the top ten hits of any given year. Everyone was listening to the same thing. This shared experience unified culture. It gave young people a common language and collective identity. We all watched the same music videos and heard the same anthems. This crossed social boundaries and defined the spirit of the era.

The Frankfurt theorists argued that this homogeneity had a dark side. They believed that when art is treated like a product on an assembly line, it loses its power to challenge the status quo. Because the gatekeepers controlled the airwaves, they could dictate what was considered good or relevant. This created a feedback loop where the audience only liked what was familiar, and the labels only provided what the audience already liked, a loop that seems oddly quaint today compared to the new era of algorithmic media. Our shared identity was essentially a manufactured commodity.

Horkheimer and Adorno argued that mass-produced art and entertainment turned individuals into passive consumers rather than active, critical thinkers. By creating a false sense of satisfaction, the culture industry distracted the masses from the realities of their economic and social conditions. They felt that true art should be difficult and challenging. Mass culture, by contrast, consisted of easily digestible, predictable products that reinforced the values of the ruling capitalist system. This cycle prevented people from imagining or demanding a different, more liberated way of life.

Even in 2000, I questioned those ideas. Mechanical reproduction was democratizing. Prior to recordings and radio, access to professional music performance was limited to the wealthy. Traditional folk music was familiar, comforting and repetitive. The popular folk canon of any given culture often remained consistent for generations, even centuries. Artists in the 20th century wrote and recorded the songs that people wanted to hear, because they wanted to break even on the cost.

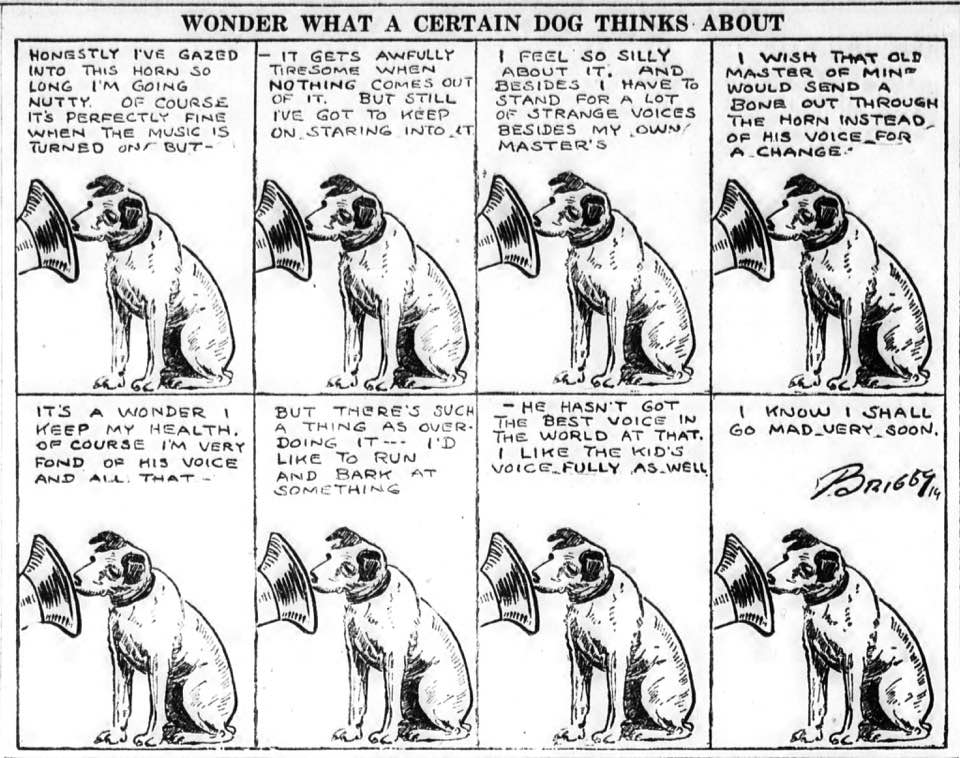

In my 2000 paper, I traced the role of the musician back before the age of mechanical reproduction, when music was an ephemeral, lived experience performed by people as a vital component of liturgical ceremonies, seasonal celebrations, or as a simple reprieve after a day of manual labor. A household might own a single stringed instrument, a fife, or a drum, reserved for special occasions. The exceptionally talented occasionally ventured beyond their villages as traveling troubadours or members of theatrical caravans. To be a musician was to be a nomad, trading one’s virtuosity for a meager living on the road.

It was only with the advent of mechanical reproduction that music was transformed from a lived social relation into a commodity that could be objectified and experienced in a completely novel way that we take for granted: exact infinite repetition—an event loop. A momentary sound could be enshrined. Music and spoken word were immediately the most vital and popular content for mechanical media, carrying dense and culturally rich information. As our technical capacity to capture and synthesize sound accelerated, the historical barriers to entry began to crumble.

This progression follows a clear arc of democratization through automation. The player piano and the drum machine automated the physical dexterity of the performer. The tape machine, synthesizer, sequencer and the digital audio workstation eventually collapsed the architecture of the recording studio. Each innovation chipped away at the wall between the amateur and the professional, steadily transferring the power of creation from a specialized few to the average person with an impulse to express themselves. Every leap in technology has dissolved the technical requirements of the craft while expanding the horizons of who is permitted to create.

At each stage where these barriers lowered, the volume of music media surged. The supply-demand curve for artistic works reached a peak of commercial optimization in the late 20th century, when the industry had perfected the art of selling the same few sounds to everyone at once. With the dawn of the internet, cracks began to form. Yet, even five years ago, some residual friction remained. To perform, capture, and polish a recording still demanded a significant investment of time and a mastery of specific technical skills. These lingering requirements acted as a final set of constraints on the total supply.

With the advent of AI generation, the barriers are gone. Even before AI, approximately 100,000 to 120,000 new songs were uploaded to Spotify daily. Independent artists have compared releasing music to urinating in the ocean. Suno, the leading AI music platform, generates a staggering 7 million songs per day, which is enough to replicate Spotify’s entire 126-million-track catalog roughly every 18 days. In this saturated landscape, all that remains to distinguish a specific signal from the deafening noise is a chaotic mix of taste, momentum, chance, talent, or the brute force of a massive marketing budget.
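As a rough check on the scale described above, the quoted figures can be run through a few lines of Python. This is only a back-of-the-envelope sketch: the constants are the numbers cited in the text, taken at face value, and the function names are made up for illustration.

```python
# Back-of-the-envelope check using only the figures quoted in the text.
SUNO_SONGS_PER_DAY = 7_000_000                # reported Suno output per day
SPOTIFY_CATALOG = 126_000_000                 # reported Spotify catalog size
SPOTIFY_UPLOADS_PER_DAY = (100_000, 120_000)  # reported pre-AI daily upload range


def days_to_match_catalog(catalog_size: int, songs_per_day: int) -> float:
    """Days of generation needed to equal the catalog size."""
    return catalog_size / songs_per_day


def output_ratio(generated_per_day: int, uploaded_per_day: int) -> float:
    """Generated songs per uploaded song, per day."""
    return generated_per_day / uploaded_per_day


days = days_to_match_catalog(SPOTIFY_CATALOG, SUNO_SONGS_PER_DAY)
low_ratio = output_ratio(SUNO_SONGS_PER_DAY, SPOTIFY_UPLOADS_PER_DAY[1])
high_ratio = output_ratio(SUNO_SONGS_PER_DAY, SPOTIFY_UPLOADS_PER_DAY[0])

print(f"Days to match the catalog: {days:.0f}")
print(f"Generated songs per uploaded song: {low_ratio:.0f} to {high_ratio:.0f}")
```

On those numbers, a single platform matches the whole catalog in about 18 days and produces somewhere between 58 and 70 songs for every one that human artists were uploading.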

AI now generates high-quality, finished products in minutes—a feat that would have taken the most efficient human producers hours just a few years ago. The “virtuosity” of these artificial performers often exceeds that of the seasoned professional. We’ve reached a point where the machine can simulate technical perfection and emotive nuance with a speed and consistency that no biological entity can match.

The AI music creation platforms themselves are so good that they reframe the paradigm of both production and consumption. While many professionals integrate them into their existing workflows, for most users the platforms are personal playgrounds. They interact via simple prompting, or use the platforms as songwriting tools that turn audio and textual ideas into fully produced works, all in a matter of minutes.

Without the old supply-demand dynamics of professionally produced products and distribution, the very notion of discovery and consumption is flattened into a completely novel interactive experience. One doesn’t choose new music as much as magically wish it into existence. Because wistful creative concepts are immediately actualized with a “one-shot” prompt, highly polished ideation becomes possible on an unprecedented scale. A person with a unique idea who never dreamed of generating music is suddenly freed from the constraints of performance and production.

The developers of platforms like Suno understand that the unit economics and network effects are so distinct from prior norms that their business model is more like a massively multiplayer online game than a music production tool. It’s more Minecraft than iTunes. Most users will build personal worlds and share them with a limited network of others. The volume of audio output is so immense and sonically homogeneous that its potential beyond its borders is limited. According to research by the AI platform Neume, nearly half of all AI-generated songs are never played. The median song receives a single play. Over 97% of songs receive fewer than 50 plays. And fewer than 0.01% of songs ever achieve meaningful traction. Deezer reports that 28–39% of daily uploads to streaming platforms are AI generated, but that they account for only ~0.5% of actual streams.

AI music is convincing. According to a major global study conducted by Deezer and Ipsos in late 2025 involving 9,000 participants, approximately 97% of people could not distinguish between fully AI-generated tracks and human-made music in blind listening tests.

However, studies show that frequent listeners and professionals learn to perceive it, recognizing spectral artifacts, a characteristic softness in percussive sounds and a static, sterile quality in instrumentals. Beyond audio quality, they recognize structural patterns and compositional tropes. The current generation of AI executes transitions and key changes with a precision that feels unnatural, and its vocal synthesis frequently utilizes a predictable style of melodic gliding. Through repeated interaction, the uniqueness of the output fades.

The consensus among frequent users of platforms like Suno and Udio is that they eventually encounter a plateau of homogeneity. Because these models generate audio based on the most probable patterns within a genre, the results often sound like a blurry average of their training data. The patterns become familiar and share predictable structures, standard melodic progressions, and a certain tonal flatness.

While AI music models can introduce randomness through technical settings like temperature, that randomness differs from human inventiveness. Human production is often divergent and subversive, containing micro-timing imperfections and unique structural choices that model training averages out. While current-generation AI is great for rapid prototyping and generating radio-ready foundations, it struggles to replicate the subtle attributes that are typical among human creators.
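For readers curious what a temperature setting actually does, here is a minimal Python sketch of temperature-scaled sampling. It is a generic illustration, not a description of how Suno, Udio, or any particular music model works internally. The chord list, the scores, and the function names are all hypothetical.

```python
import math
import random


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens the peak."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(options, scores, temperature, rng):
    """Draw one option according to the temperature-adjusted probabilities."""
    probs = softmax_with_temperature(scores, temperature)
    return rng.choices(options, weights=probs, k=1)[0]


# Hypothetical toy data: candidate next chords and made-up model scores.
chords = ["I", "IV", "V", "vi", "bII"]
scores = [2.0, 1.5, 1.8, 1.0, -1.0]

rng = random.Random(0)  # fixed seed so runs are repeatable
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, t)
    picks = [sample_next(chords, scores, t, rng) for _ in range(8)]
    print(t, [round(p, 2) for p in probs], picks)
```

In this toy setup, raising the temperature makes the unlikely chord turn up more often, but every pick still comes from the same scored list. The setting reshuffles the odds among patterns already learned; it does not invent options outside them.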

A survey conducted by The Hollywood Reporter and the University of Miami’s Frost School of Music found that a majority of Americans remain skeptical of AI-generated music, with 52 percent of respondents expressing no interest in it. While Gen Z showed the most openness to AI-driven creativity, the study indicates a broad consensus that original artists deserve compensation when their styles are replicated. Majorities in all age demographics aren’t yet sold on the idea that AI songs should be created at all without human musical contributions. A September 2025 Pew Research poll found that 53% of U.S. adults think AI will worsen people’s ability to think creatively.

But subjectively, it turns out that people like AI pop music. A 2025 study by Chia, Hartanto, and Tong found that listeners rated pop songs more favorably when they were labeled as AI-generated rather than human-composed. Despite the music itself being identical and the labels being randomized, participants reported higher levels of positive emotions, such as happiness and energy, for the tracks they believed were created by AI. These findings contradict typical expectations of a bias against machine-made art, suggesting a shift in how audiences perceive and value AI creativity.

Thus, we are left with a few remaining outposts of the authentically human. We find value in the comedic or the surreal, those creative ideas that serve as a digital funhouse mirror. We turn toward the live event, the physical performance that is unquestionably human, where the risk of failure and the presence of a breathing body become the primary draw. We develop an ear for those intentional, soulful imperfections we subconsciously detect as human as we begin to map the predictable, polished patterns of the artificial.

Some artists are transitioning toward a revival of radical abstraction to differentiate. Much like the modernist art movement was forced to abandon realism when confronted by the camera and lithographic print, today’s producers are embracing the strange and the difficult. We see this in the rise of “maximalist” hyperpop and EDM, or in the resurgence of complex, polyrhythmic jazz in England that defies algorithmic prediction. Just as painters like Pollock or Rothko responded to photography by stripping away the subject to reveal the raw medium, musicians are beginning to prioritize the extreme, the dissonant, the accidental, and the overtly imperfect. We’re also seeing a shift toward “process art,” where the story of the physical struggle, the specific local context, or the unique technical limitation becomes as important as the product.

Twenty-six years ago, I migrated from the painstaking labor of linear analog tools like magnetic tape to a digital world where I could slice and dice dozens of organic and synthetic sound sources at will. It allowed me to present a version of music that was highly stylized and curated. The sample works on this blog represent the culmination of that specific discipline: playing, recording, and editing media into what are essentially complex and often painstakingly crafted sound collages.

In this sense, the arrival of AI does not represent a sudden break with the past, but rather the logical evolution of a long process of virtualization. We’ve spent decades using digital tools to make music that is perfectly on pitch and perfectly in time. We can hardly be surprised when the machines learn to cut out the middle “man”. This saturation of the “perfect” creates a renewed appetite for the “real.” We may reach a point where the value of art will no longer be found in its technical flawlessness, but in the irreducible evidence of a human hand behind the work. The breath, the hesitation, the slightly out-of-tune string are the markers of authenticity. When everyone is using a beauty filter on their selfie photos, an honest portrait by a great photographer becomes all the more impressive.

I put in my 10,000 hours as a live musician and producer, yet I’m acutely aware that my performances remain stubbornly flawed. This is despite twenty-five years of relentless struggle to align myself with the digital click track. Thirty years ago, my sense of time and meter was entirely organic, a living pulse developed through the shared experience of ensemble play. Today, the musicians I encounter in the studio and on stage are different. Their internal clocks are forged by years of metronomes and in-ear monitors. As a result, many have achieved a level of technical precision that was once the stuff of legend. They can sing perfectly in tune or play with a temporal accuracy that rivals a microprocessor.

The “imperfections” we once viewed as obstacles to professional polish are now the things that distinguish a work from a prompt-generated file. The struggle against the click track was the tension created by a human heart trying to beat in sync with a crystal oscillator. That tension is where the art actually lives. It’s where a singer bends into a note with raw emotion or a rhythm ebbs and flows like a tide. These are the sonic signatures of unique human DNA vibrating in a specific slice of time and space.

For the older audiophile raised on analog music performed by people, the timbre of a living organism is inherently original. A human voice is a signature of the specific geometry of a person’s bones, the tension in their respiratory system, and their state of mind. When human flesh meets strings, sticks, or keys, it creates a unique event that can never be truly replicated. Even the air in the room makes the moment singular; a microphone captures a space that is constantly shifting based on the temperature, the humidity, and the movement of the bodies within it.

Music made by gathered humans is a sequence of points on an event horizon. It is a constellation of variables. It’s what the performers ate that morning, their relative health, the emotional weight they carried into the session, and perhaps even their physical orientation relative to the earth, moon, sun and stars. Those sound signatures remain beyond the reach of the algorithm, and they now have greater value. This realization draws me back to the same convictions I held twenty-six years ago, during a time when I was possessed by the spirits of jazz, blues, folk, and fusion. These were not merely genres; they were hyper-human events, singular collisions of intent and accident captured in the amber of recorded media.

In the tradition of jazz music, which is often captured in a live take, the music exists in the micro-adjustments between players: the way a drummer spaces notes based on cardiovascular pulse or how a bassist leans back against the beat to convey a sense of groove and swing. These adjustments are the result of an embodied physical existence, a specific social tension, a dialogue between individuals who are risking something in real time. Some people find this tension challenging and unsettling. Others find that, once initiated into the experience, modern hyper-produced pop music sounds like a lifeless caricature of itself. Many, myself included, consider works like A Love Supreme sacred artifacts.

When we listen to old recordings, we’re eavesdropping on a historical moment that can never happen again, a ghost of a physical reality. AI can simulate the “style” of a jazz record, but it cannot simulate the risk of the performance or the weight of the history that led to that specific improvisation. By returning to these human-centric forms, we’re asserting that the value of art lies in the fact that a person was there, in a room, breathing, struggling, and reaching for something that wasn’t there a moment before.